Last week, Google debuted a technology it calls Duplex, which it touted as being capable of making phone calls on users’ behalf, booking appointments with businesses or making restaurant reservations. The stated idea was that this would save users precious time, but time to do what? Google’s preceding presentation, on technology that could autocomplete users’ emails, was based on the same premise, streamlining life’s banalities. But if virtual assistants could be so effective, it would seem to imply that our entire lives are so routine and our attitude toward them so indifferent that they may as well be autofilled. In CEO Sundar Pichai’s demonstration of Duplex at the company’s I/O developer conference, the speaking bot was shown apparently comprehending a speaker in real time and even punctuating its interjections with humanoid “ums” and “ahs.” This touch suggested a clear intention of fooling the humans who would have to interact with the bot.

A Google spokeswoman later clarified that Google Assistant would self-disclose as a bot, but that was not at all evident in the presentation. Perhaps that kind of conversation, between a bot and the worker or small business owner required to attend to it, would have made the stakes too plain: how consumers could use Google’s technology to impose their contempt on those they expected to serve them. Instead the demo was framed as a feat of trickery: Look how well our bot pretends! though the subtext — Look how foolish the people on the phone seem! — remained palpable and was likely all the more seductive as a mere implication. The promise of Duplex is not its potential to save time, or streamline life’s banalities, but the power of fakeness itself, which has the quality of a weapon — something that imbues its user with a fearsome and alluring power.

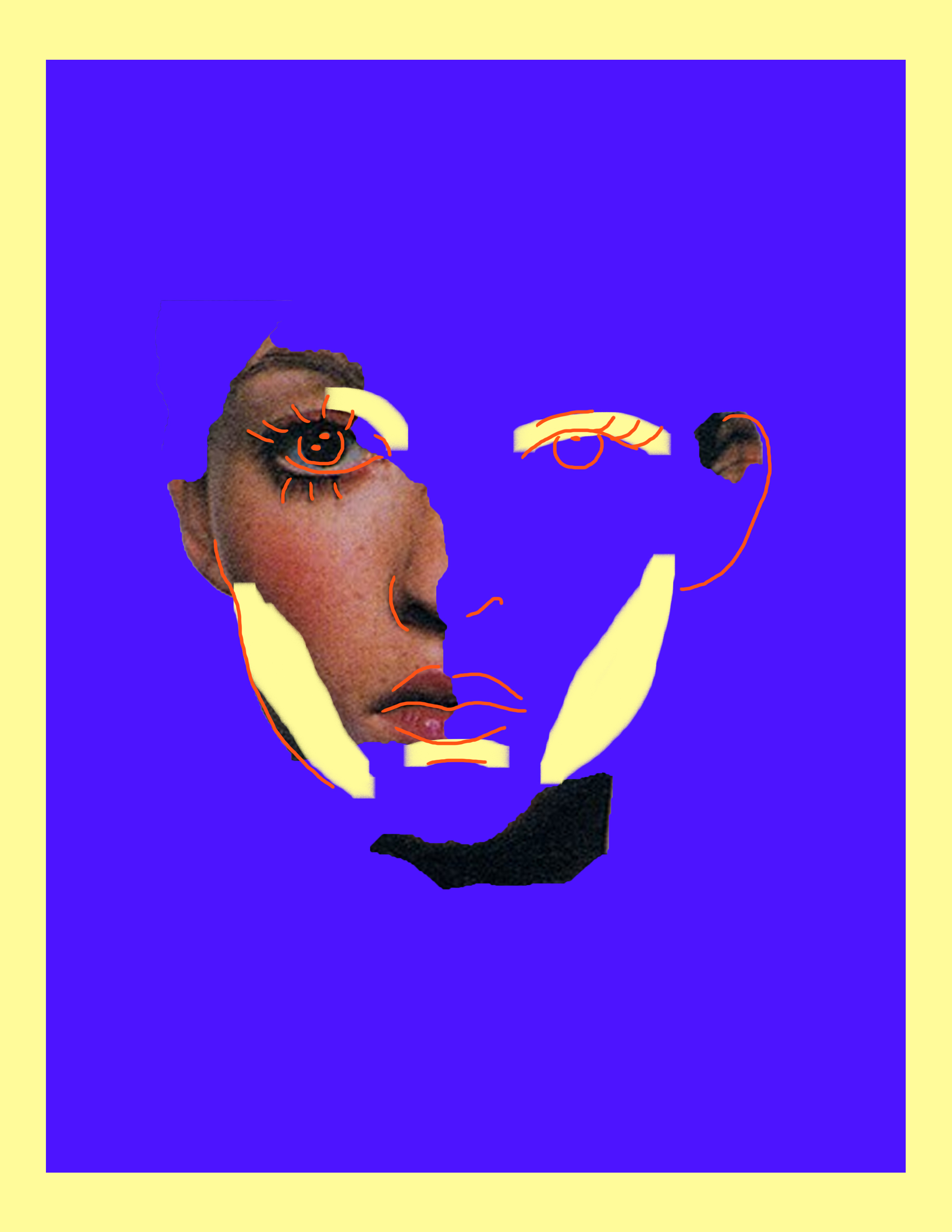

The concern with technological fakery and digital impersonation often involves this sort of subterfuge. Egregious forms of media manipulation — deepfakes, for instance — are held up for scrutiny, with questions of whether they are sufficiently convincing distracting from persistent underlying questions about the longstanding ruses of power. In “Body Doubles,” PJ Patella-Rey examines how deepfakes and other forms of nonconsensual pornography are typically framed as matters of information control, “which suggests that the body misappropriated in deepfakes and other images is just a kind of sensitive information, more like a password or a Social Security number than something more integral to the self.” Here, the medium for the fakery and the panic about the supposed loss of reality that alarmists take from it (cf. Franklin Foer in the Atlantic warning of “the arrival of a world beyond truth … where our eyes routinely deceive us,” as if that hasn’t always been the case) can distract from the personal nature of violation. The problem is not that the digital is easier to fake than the analog, but that society doesn’t regard the harms carried out through digital means, to the digitized extensions of ourselves, as seriously as other forms of harm.

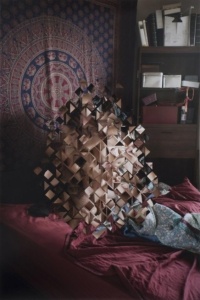

The ability and desire to persuade with false information is not a new or technology-based problem, but a problem inherent to human communication. News, for instance, is always subject to interpretation in its construction, delivery, and context, and so is always potentially fake from a particular point of view or with a particular intention in mind. As Adam Clair argues in “Negative Space,” the falsification of contextual information was, is, and will continue to be the condition for inducing specific lies to be received as truths.

Drew Nelles in “Unreal News” looks at the changing fate of the Onion as the modes of fakery in conventional media have changed and different ironic strategies have been overtaken by reality. “The Onion imitates the news,” Nelles writes, while other outlets “go one step further, imitating parody to make fake news that seems real. One kind of fake news has passed the baton to another.” But “fake news” is deceptively hyperbolic — it disguises a much more insidious problem. The Onion’s parody bumps up against its vulnerability to advertisers and clicks, and we see it, throughout its history, reflecting the slanted catering of the media it mimics.

The cultural prominence of “fakes” warrants the spurious rejection of what we would prefer to disbelieve, as Donald Trump’s proclivities amply demonstrate. When Western media takes delight in North Korean propaganda that “fails” at Photoshop, it misses the seamless integration of its own propaganda, its complicity in making it, its gullibility in accepting it, and its similar cravenness in seeking to placate authority. The purging of enemies from Soviet images, fabricating them into Stalin’s lone portraits over time, foregrounds the crimes they represent despite, or perhaps because of, their alterations. But we should not make any medium the scapegoat for the abuses of power of those using it.

Featuring:

“Negative Space,” by Adam Clair

“Body Doubles,” by PJ Patella-Rey

Thank you for your consideration. Visit us next week for Real Life’s upcoming installment, SELF OPTIMIZATION.