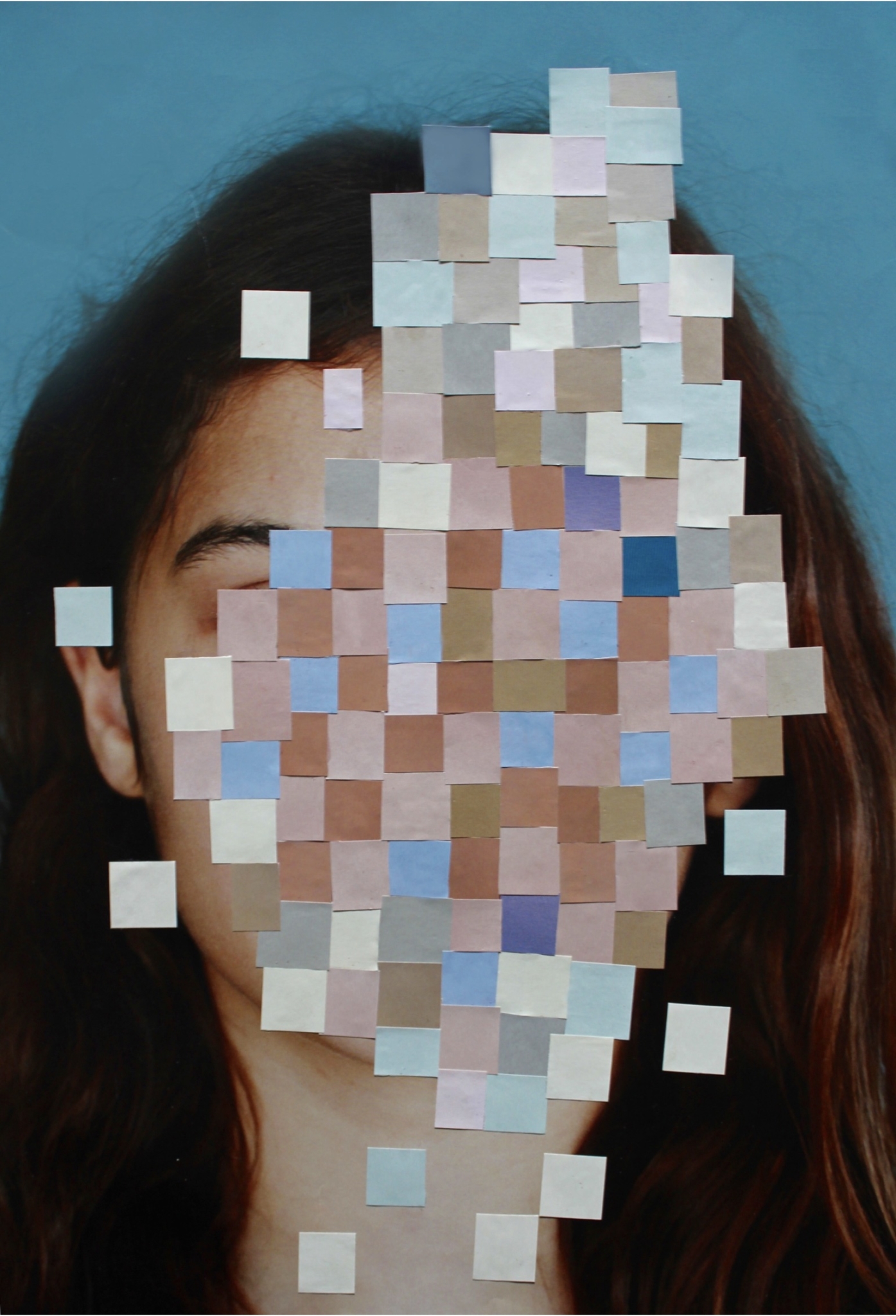

I open TikTok and make my way through its array of trending filters. One gives my face a peppering of light freckles, another transforms it into some sort of Halloween-themed pumpkin monster. After a bit of searching, I click the “Belle” filter, and my face changes once again. The contrast increases, reddening my cheeks and deepening the contours of my face. My skin smooths and my lashes elongate, and slight creases appear under my eyes.

I first came across this filter on my TikTok “For You” page. Initially I saw creators complaining about how unflattering it was, focusing on the “eye-bags” that the filter gave them as proof of its shabby design. After all, what sort of beauty filter would intentionally make you look tired? But other users, mostly of East Asian descent, championed the “Belle” filter as a sort of representational feat: these eye-bags weren’t really eye-bags, but aegyo-sal, little pouches of fat that many East Asians have under their eyes. According to this counter-narrative, the filter simply looked bad on the (generally white) creators complaining about it because it wasn’t made for them. As one creator put it, “welcome to the world of not automatically being the default.” This was, to them, a matter of representation: out of so many filters designed for white faces, it constituted a small rectification.

It was hard to deny that I looked good with the filter, better than I did with most. I was pretty, and what made me pretty wasn’t necessarily a narrower nose, or a smaller face (though some of the contouring did nudge those features in that direction), but features that I already had, amped up a notch. As I stared at my face, however, dolled up and looking more than a little unlike myself, I wondered what sort of representation this was.

When people talk about inclusive representation, they generally turn to the same topics: casting in movies, the narratives chosen to highlight, the presence of people of diverse backgrounds in high, public positions. But this overwhelming focus on the subjects of representation often causes us to ignore the tools of representation, which are neither objective nor inevitable. Like all popular technologies, their development is motivated by a variety of economic interests and swayed by assumptions and bias. It takes a filter like Belle, designed for people of color, to make us realize the extent to which most filters aren’t. These filters emerge from a long technological lineage that has always been rife with latent assumptions, competing interests, and economic motives. Understanding this context is critical.

If we’re to understand the extent to which our contemporary representational technologies have been moulded by bias, we have to go back to the advent of photography. As early as the 1840s, photographic technology and practice — from its processing technologies, to the mechanical components of camera equipment, to ways of lighting a subject — were impacted by the desire to accurately portray white skin. As Richard Dyer writes in White, a collection of essays analyzing representations of whiteness throughout history, once interest shifted from painted portraiture to photographic portraiture, “the issue of the ‘right’ technology (apparatus, consumables, practice) focused on the face and, given the clientele, the white face.”

Money was to be made in high-fidelity representations of white people, and subsequent innovations followed the dollar. From the chemistry of film stock, to aperture size, to time spent in chemical baths, “all proceeded on the assumption that what had to be got right was the look of the white face.” As a result, images of darker-skinned subjects would often appear washed out, nuances in tone and color gradients obliterated, such that these subjects appeared dramatically underexposed when placed next to their white counterparts.

These biases followed photography out of monochrome and into color. In the 1950s, the U.S. government acted against Kodak’s monopoly on the photo market: back then, Kodak not only sold almost all of the film in the U.S., but also controlled the means of its development. Eventually, the government forced the company to stop bundling the cost of film processing and printing into the price of its products; as a consequence, Kodak had to figure out a way for independent film processors to develop its film stock. The company created a printer that could be sold to smaller photo labs, spawning the more accessible S5 printer line. For independent labs to calibrate these printers correctly, however, they needed an initial reference, so Kodak sent color prints and unexposed negatives to accompany the product. As Ray Demoulin, former head of Kodak’s photo tech division, told NPR, these references allowed the labs to “match their print with our print” when they processed their negatives.

The prints sent by Kodak were referred to as “Shirley Cards,” so named because they were photos of Shirley Page, a studio model. Shirley was, of course, white, as was the procession of “Shirleys” that would follow her until the 1990s. In effect, this meant that a printer was set up “correctly” only to the extent that it produced an accurate likeness of white skin. Practices that could have, for example, produced better images of darker skin were “technically” incorrect according to manufacturer specs. Even in color, images of people of color would remain inaccurate for decades.

To add insult to injury, Kodak’s investment in increasing the dynamic range of its film happened independently of any desire to accurately portray darker skin. As Lorna Roth’s interviews and research revealed, it was in large part pressure from chocolate makers and furniture companies that finally pushed Kodak to develop film stock that could capture the nuances of chocolate and wood grain (and, as an unintended consequence, black and brown skin). It’s an unsettling but perhaps unsurprising fact that the desire to showcase objects in high fidelity came before the desire to showcase people of color with the same level of accuracy. Kodak’s Gold Max film was awkwardly advertised as “able to photograph the details of a dark horse in lowlight,” and would become the preferred stock among photographers concerned with representing non-white subjects.

Digital photography would remedy some of these challenges, and give photographers more autonomy over how images were shot and processed, but sensors and electronic camera equipment would still retain some bias toward white skin. In photographer Syreeta McFadden’s words, “even today, in low light, the sensors search for something that is lightly colored or light skinned before the shutter is released. Focus it on a dark spot, and the camera is inactive. It only knows how to calibrate itself against lightness to define the image.” The default of whiteness casts a long shadow.

Commercial facial filter use is still fairly new among popular image-making practices. Most recent histories trace it back to 2015, when Snapchat acquired the face modification app Looksery and used that technology to launch Lenses. This would serve as a sort of cultural progenitor for the thousands of filters now populating these platforms. Generally, they fall into a class known as “deformation” and “facial distortion” filters, so called because they allow users to change things like facial structure, nose shape, and the size of their eyes. Today, nearly all are built on a class of tools known as Facial Detection and Recognition Technologies (FDRTs).

FDRTs are technologies that allow programs to identify human faces, as well as the salient features of a face that filters can then mould. As Dr. David Leslie writes in a paper for the Alan Turing Institute, these tools were made possible by the advent of digital photography and the subsequent explosion of easily accessible images that came with the rise of the internet. Digital tools made photographs “computer-readable” by turning them into arrays of numerical values. When learning models were provided with a sufficiently large dataset, they could be trained to recognize patterns in images from the “bottom-up.” Prior to this, facial recognition programming was “top-down”: rules for identifying faces, like “humans have two eyes,” had to be manually defined, a nearly impossible task given the sheer variation in human faces. Now, neural networks could be trained to identify features and patterns associated with a given class of images, and then apply this identification schema to instances outside of that set. This allowed them to be far more flexible, properly identifying human faces in a much wider range of contexts.
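To make “arrays of numerical values” concrete, here is a minimal sketch in Python. The tiny 4x4 image, the function names, and the brightness threshold are all invented for illustration; they are not drawn from Leslie’s paper or from any real filter.

```python
# A tiny 4x4 grayscale "photograph": each number is one pixel's brightness,
# from 0 (black) to 255 (white). The values are made up for illustration.
image = [
    [12,  40,  40, 12],
    [40, 200, 200, 40],
    [40, 200, 200, 40],
    [12,  40,  40, 12],
]


def mean_brightness(pixels):
    """Average brightness over every pixel in a grid of rows."""
    values = [value for row in pixels for value in row]
    return sum(values) / len(values)


def pixels_above(pixels, threshold=128):
    """Return (row, column) coordinates of pixels brighter than the threshold.

    The threshold of 128 is an arbitrary, hand-picked assumption.
    """
    return [
        (r, c)
        for r, row in enumerate(pixels)
        for c, value in enumerate(row)
        if value > threshold
    ]


print(mean_brightness(image))
print(pixels_above(image))
```

The hand-picked threshold is a small-scale version of a “top-down” rule: someone decided in advance what counts as bright enough to matter. A “bottom-up” system would instead learn such cutoffs from example images, which is why the makeup of those examples matters so much.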

Of course, the fact that these models operated “independently” of explicit human direction didn’t mean that the neural networks were immune to human bias. In 2009, an Asian American blogger complained that their Nikon camera would ask whether someone in their photo had blinked even though their eyes were clearly open. In a similar case that same year, an HP computer’s facial tracking failed to identify a Black user while working just fine for his white colleague. Nearly a decade later, Apple refunded a Chinese woman after her iPhone X’s facial unlock feature wasn’t able to distinguish her from her colleague.

Examples of bias go beyond anecdotal evidence. Research has demonstrated that facial recognition technologies perform differently across different groups. Microsoft’s FaceDetect, for instance, was shown to have a zero-percent error rate for white males, and a 20.8 percent error rate for dark-skinned females. In part, this has to do with the datasets that these algorithms are trained on. As Dr. Leslie writes, “the most widely used of these datasets have historically come from internet-scraping at scale rather than deliberate, bias-aware methods of data collection.” As a result, representational differences within datasets are commonplace. One IBM study noted, for example, that six out of the eight most prominent datasets consisted of over 80 percent light-skinned faces.
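The arithmetic behind findings like these can be sketched in a few lines of Python. Everything below is hypothetical: the group labels, the ten evaluation records, and the function names are invented for illustration and do not reproduce any study’s data or method.

```python
from collections import Counter

# Hypothetical evaluation records: (group label, was the prediction correct?).
# Eight of the ten records belong to one group, echoing a skewed dataset.
records = [("lighter", True)] * 8 + [("darker", True), ("darker", False)]


def overall_error_rate(records):
    """Share of all predictions that were wrong, ignoring group."""
    wrong = sum(1 for _, correct in records if not correct)
    return wrong / len(records)


def error_rate_by_group(records):
    """Share of wrong predictions within each group."""
    totals = Counter(group for group, _ in records)
    errors = Counter(group for group, correct in records if not correct)
    return {group: errors[group] / totals[group] for group in totals}


def share_by_group(records):
    """Fraction of the dataset that each group makes up."""
    totals = Counter(group for group, _ in records)
    return {group: count / len(records) for group, count in totals.items()}


print(overall_error_rate(records))
print(error_rate_by_group(records))
print(share_by_group(records))
```

In this toy data, the single aggregate number looks respectable while the per-group breakdown shows that every error falls on the smaller group. That is why audits that report results group by group can surface disparities a headline accuracy figure hides.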

The “whiteness as default” paradigm critiqued by TikTokers goes far beyond filter design and execution, into the technological architecture of those filters. Part of why people of color might look “weird” on a given filter, or why a filter might not work on them at all, isn’t simply that the filter wasn’t designed with them in mind; it’s that the technologies upon which the filter was built weren’t designed to properly recognize non-white faces and features. It’s clear, then, that representation is not merely a matter of designing more filters to accentuate non-white features. Rather, it is a matter of changing every stage of the development process.

The solution commonly suggested for the whiteness of filters and their underlying technologies is plurality: we need more models of beauty to aspire to, more filters that show more ways to be beautiful, facial recognition technologies that work equally well for everyone. As Jennifer Li writes in InStyle, “the Belle filter… is such a revelation because it proves there is no singular definition of beauty. The Belle filter is a tool that social media users can use to connect more closely to their heritage and to embrace the ways that they naturally don’t conform to the white status quo. And that’s a beautiful thing.” For Li and others like her, the filter is an example of how we might achieve a robust and diverse ecosystem of visual technologies.

But behind these issues of execution lies a fundamental question: Is this sort of equity worth striving for? Is this new class of representational technologies something good in the first place? It’s easy to be fatalistic in matters of technological production and dissemination — to focus primarily on making the best out of a situation, rather than imagining beyond it.

We have reason to be wary of replacing a monolithic standard of beauty with a more diverse, but still normative standard. Gendered differences in filter perceptions and usage have been observed in children as young as 10 years old, with younger boys generally using filters solely for play, and younger girls using filters to modify their appearance to take away physical “imperfections.” Having a wider array of filters may address certain racialized pressures, but it wouldn’t touch the wider pressures created by a patriarchal industry that profits at the expense of mental health. While Li may find the “Belle” filter a way to harmlessly connect with one’s heritage, others have lamented the fact that they don’t look like that in real life.

In recent years, several start-ups using many of the same facial recognition technologies that underpin these filters have cropped up with the promise of helping you identify your strengths and weaknesses by rating your face (at a price). One such start-up, Qoves, even uses this analysis to suggest surgeries to correct the imperfections it identifies. The co-founder of Qoves told an interviewer that “you can escape this Eurocentric bias just by becoming the best-looking version of yourself, the best-looking version of your ethnicity, the best-looking version of your race.” Notably, Qoves leans into the concept of racial equality in its branding, highlighting how its computer vision technologies provide an avenue for people of color to escape bias; their “Machine Approach,” for example, has purportedly allowed them to “develop tools to tackle racial beauty biases in AI.” This is achieved in part by training their algorithms on “diverse” datasets populated by computer-generated faces of people of different races, not by images of actual people of color.

That makes it all the more concerning when Qoves, and companies like it, appeal to scientific objectivity — a concept so often deployed to justify and institutionalize bias — to defend their service. It’s a narrative we’ve seen before. These neural network systems are largely black boxes that “regurgitate the preferences of the training data used to teach it”; and these companies have a financial interest in keeping the mechanics of these technologies close to their chest. What’s taken for granted is the idea that there is a better: that your looks are flawed as they are, and that “science” — proprietary technologies, more accurately — can fix that.

Creating more of these filters and making facial recognition technologies more effective also hones these tools so that they can be used elsewhere, in domains where the potential repercussions are clearly negative. This includes commercial uses, where companies leverage these technologies to find new audiences and spark new demands (with eye tracking tools that promise to deliver “shopper insights” or tailor advertising to your mood based on facial detection), as well as more disturbing uses in surveillance and control systems, with the potential for mass implementation that could be particularly challenging to reverse.

It was recently revealed, for example, that Huawei had tested facial recognition software that could be used to identify Uighurs as part of the Chinese government’s devastating campaign of oppression against this ethnic minority. In a perverse inversion of the historical development of facial detection and recognition technologies, these technologies were explicitly designed to work well on members of a given minority group, with the ultimate end of controlling that population. Additional papers revealed that other researchers had tested technologies meant to differentiate between ethnically Korean, Tibetan, and Uighur faces. This shows how these tools could intersect with a dangerous ideology of “race science” by framing these technologies as capable of identifying essentialized differences between different ethnic groups. Given that the popularity of debunked race science is already on the rise, this should be cause for particular concern.

The panoptic potential of facial recognition technology is being leveraged in police forces and private security forces all over the globe, and extensively in the U.S. at both the federal and local levels. Ineffectual surveillance systems that have a hard time telling non-white subjects apart incorrectly identify people of color as threats or criminals at higher rates than their white counterparts. And the use of sentiment analysis by everyone from companies identifying personality fit during interviews to law enforcement flagging “suspicious behavior” easily intersects with racialized attitudes towards emotional expression: AIs have been shown to assign Black men negative emotions at far higher rates than their white counterparts. Bias can creep in at all levels of these systems, which have already been linked to an increase in self-censorship, including a drop in willingness to engage in protest. Designing more effective and more equally distributed facial recognition technologies creates room for a new kind of oppression based on heightened surveillance. As long as these tools have a place in the arsenal of state power, they will cause harm.

Ever more powerful technologies, then, don’t offer a solution. Rather, what’s needed is a radical rethinking of what kinds of tools belong in the hands of our police and governments in the first place, and a mobilization for reform.

Looking back now, it may seem obvious that these representational technologies were moulded by bias and assumptions. But the faith in science as objective and empirical persists, and is echoed in the claims of neutrality coming from Silicon Valley today. Insofar as innovation builds on what came before, understanding the original sins upon which modern tools were forged helps identify potential weaknesses. Computer vision has positive potential, of course: in domains like medicine, for instance, where its capabilities for pattern recognition outstrip our own perceptual abilities. Technology is being developed that may change the ways in which melanoma is diagnosed, for example, with these programs showing similar sensitivities to dermatologists’ diagnoses. This makes it all the more important to get this technology right for people of all skin tones, lest we put certain groups at greater risk.

As I look at myself with the Belle filter, the image shown to me is the product of a long procession of technologies that goes back to the advent of photography, through the invention of color photos and the transition from analog to digital photography, and, finally, up through computer vision and facial recognition models. Its close relatives include surveillance apparatuses, plastic surgery consultation tools, and medical technologies capable of saving lives. We may not be able to see all of this in the image, but it’s there. Many tools have preceded the ones we have today, and many more will replace them. Understanding our history, as well as the wider world into which these technologies will be born, is necessary to ensure better tools of sight.